Generative AI was top-of-mind for NVIDIA at the computer graphics conference SIGGRAPH on Tuesday, Aug. 8. A Hugging Face Training Service powered by NVIDIA DGX Cloud, the latest version of NVIDIA AI Enterprise (4.0), and the AI Workbench toolkit headlined the announcements about enterprise and industrial generative AI deployments.

Jump to:

- ‘Superchip’ with HBM3e memory supports AI development

- NVIDIA DGX Cloud AI supercomputing comes to Hugging Face

- AI Enterprise 4.0 revealed

- NVIDIA brings the entire gen AI pipeline in-house with AI Workbench

- New RTX workstations and GPUs support generative AI for enterprise

- Omniverse embraces OpenUSD for digital twinning

‘Superchip’ with HBM3e memory supports AI development

The next installment in the Grace Hopper platform line will be the GH200, built on a Grace Hopper ‘superchip,’ NVIDIA announced on Tuesday. It consists of a single server with 144 Arm Neoverse cores, eight petaflops of AI performance, and 282GB of memory based on the HBM3e standard. HBM3e delivers 10TB/sec of bandwidth, NVIDIA said, which is three times the memory bandwidth of the previous version, HBM3.

“To meet surging demand for generative AI, data centers require accelerated computing platforms with specialized needs,” said Jensen Huang, founder and CEO of NVIDIA, in a press release. “The new GH200 Grace Hopper Superchip platform delivers this with exceptional memory technology and bandwidth to improve throughput, the ability to connect GPUs to aggregate performance without compromise, and a server design that can be easily deployed across the entire data center.”

NVIDIA DGX Cloud AI supercomputing comes to Hugging Face

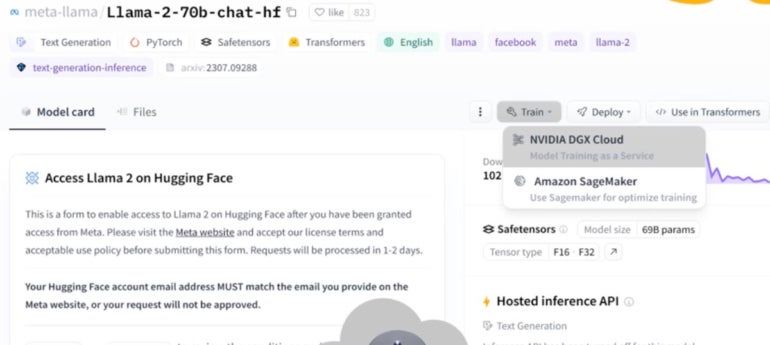

NVIDIA’s DGX Cloud AI supercomputing will now be available through Hugging Face (Figure A) for people who want to train and fine-tune the generative AI models they find on the Hugging Face marketplace. Organizations that want to use generative AI for highly specific work often need to train it on their own data, a process that can demand substantial compute and bandwidth.

Figure A

“It’s a very natural relationship between Hugging Face and NVIDIA, where Hugging Face is the best place to find all the starting points, and then NVIDIA DGX Cloud is the best place to do your generative AI work with those models,” said Manuvir Das, NVIDIA vice president of enterprise computing, during a pre-briefing for the conference.

DGX Cloud includes NVIDIA Networking (a high-performance, low-latency fabric) and eight NVIDIA H100 or A100 80GB Tensor Core GPUs with a total of 640GB of GPU memory per node.

DGX Cloud AI training will incur an additional fee within Hugging Face, though NVIDIA did not detail what it will cost. The joint effort will be available starting in the next few months.

“People around the world are making new connections and discoveries with generative AI tools, and we’re still only in the early days of this technology shift,” said Clément Delangue, co-founder and CEO of Hugging Face, in an NVIDIA press release. “Our collaboration will bring NVIDIA’s most advanced AI supercomputing to Hugging Face to enable companies to take their AI destiny into their own hands with open source.”

AI Enterprise 4.0 revealed

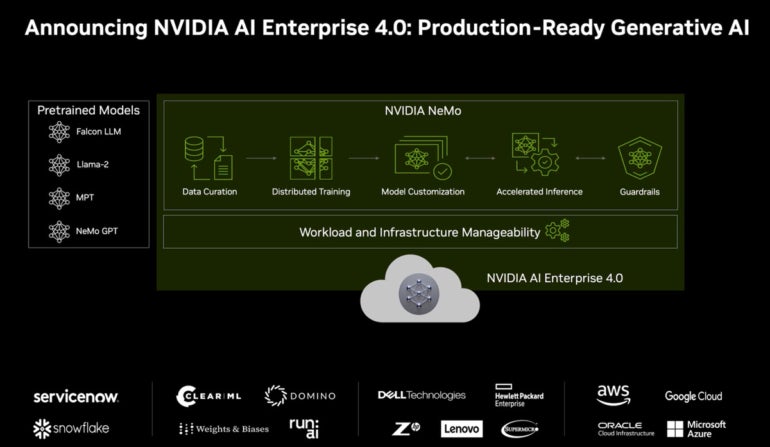

NVIDIA’s AI Enterprise, a suite of AI and data analytics software for building generative AI solutions (Figure B), will soon move to version 4.0. The major change in this version is the addition of NeMo, a cloud-native framework for building and deploying enterprise applications that use large language models. NeMo provides tooling for generative AI curation, training customization, inference, guardrails and more.

Machine learning providers ClearML, Domino Data Lab, Run:AI and Weights & Biases have partnered with NVIDIA to integrate their services with AI Enterprise 4.0.

Figure B

NVIDIA brings the entire gen AI pipeline in-house with AI Workbench

AI Enterprise 4.0 pairs with NVIDIA AI Workbench, a workspace designed to make it simpler for organizations to spin up AI applications on a PC or home workstation. With AI Workbench, projects can be moved between PCs, data centers, public clouds and NVIDIA’s DGX Cloud.

AI Workbench is “a way for you to uniformly and consistently package up your AI work and move it from one place to another,” said Das.

First, developers can bring all of their models, frameworks, SDKs and libraries from open-source repositories and the NVIDIA AI platform into one space. Then, they can initiate, test and fine-tune the generative AI products they make on an RTX PC or workstation. They can also scale up to data center or cloud computing resources if needed.

“Most enterprises lack the expertise, budget and data center resources to manage the high complexity of AI software and systems,” said Joey Zwicker, vice president of AI strategy at HPE, in a press release from NVIDIA. “We’re excited about NVIDIA AI Workbench’s potential to simplify generative AI project creation and one-click training and deployment.”

AI Workbench will be available in fall 2023. It will be included at no extra cost with other product subscriptions, including AI Enterprise.

New RTX workstations and GPUs support generative AI for enterprise

On the hardware side, NVIDIA announced new RTX workstations (Figure C) with RTX 6000 GPUs and AI-supporting enterprise software built in. These are designed to meet the heavy GPU demands of industrial digitalization and enterprise 3D visualization.

The newest members of the Ada workstation GPU family will be the RTX 5000, RTX 4500 and RTX 4000. The RTX 5000 is available now, with the RTX 4000 following in September 2023 and the RTX 4500 in October 2023.

In a similar vein, NVIDIA announced a new architecture for OVX servers. These servers will run up to eight L40S Ada GPUs each and are also compatible with AI Enterprise software. All of these systems are suited to content creation such as AI-generated images for graphic design, animation or architecture.

Figure C

“With the performance boost and large frame buffer of RTX 5000 GPUs, our large, complex models look great in virtual reality, which gives our clients a more comfortable and contextual experience,” said Dan Stine, director of design technology at architectural firm Lake|Flato, in an NVIDIA press release.

Omniverse embraces OpenUSD for digital twinning

Lastly, NVIDIA detailed updates to Omniverse, a development platform for connecting, building and operating industrial digitalization applications with the 3D visualization standard OpenUSD. Omniverse has applications in 3D animation and game development as well as in automotive manufacturing. Many of the updates were connected to NVIDIA’s deeper support for Universal Scene Description (USD), an open source format for the creation of objects and other elements in 3D graphics.

“Industrial enterprises are racing to digitalize their workflows, increasing the demand for connected, interoperable, 3D software ecosystems,” said Rev Lebaredian, vice president of Omniverse and simulation technology at NVIDIA, in a press release.

Several new integrations for Omniverse enabled by USD will be available, including one with Adobe’s AI image generation application, Firefly.

Companies using Omniverse for industrial design include Boston Dynamics AI Institute, which uses it to simulate robotics and control systems, NVIDIA said.

The newest version of Omniverse is now in beta and will be available to Omniverse Enterprise customers soon.